This is the second in a series of blog posts on the topic of evaluation in the context of the ABE program. Read Part 1 of the evaluation series, How Does ABE Approach Evaluation?

You’ve spent countless hours planning, developing, and facilitating a professional learning course for teachers. Participants seem satisfied with the program, but as they walk out the door, you’re left wondering: Did they find it useful? What questions or concerns do they still have? How can we improve the training next time? Evaluating your professional learning program provides valuable information to help answer these questions and support continuous improvement. Evaluation of professional learning activities also provides evidence linking a core element of ABE (professional learning conducted by ABE sites) with the teacher outcomes outlined in the program’s logic model. In this blog post, we’ll discuss how the ABE Program Office has approached evaluating professional learning in the past, what we’ve learned so far, and what individual ABE sites might explore going forward.

ABE program sites conduct professional development institutes (PDIs) to prepare teachers to implement ABE in their classrooms. These PDIs typically range in length from 1 to 5 days and can include training for both new and returning ABE teachers. The overall goals for ABE professional learning are to:

- Develop teachers’ understanding of ABE lab techniques and concepts

- Be context-appropriate and accessible to different teacher experience levels

- Promote teacher comfort, confidence, and self-efficacy

- Support local alignment to required content standards

- Enhance classroom facilitation skills

- Strengthen the ABE teacher community

- Build teachers’ sense of value for, and ownership of, the curriculum

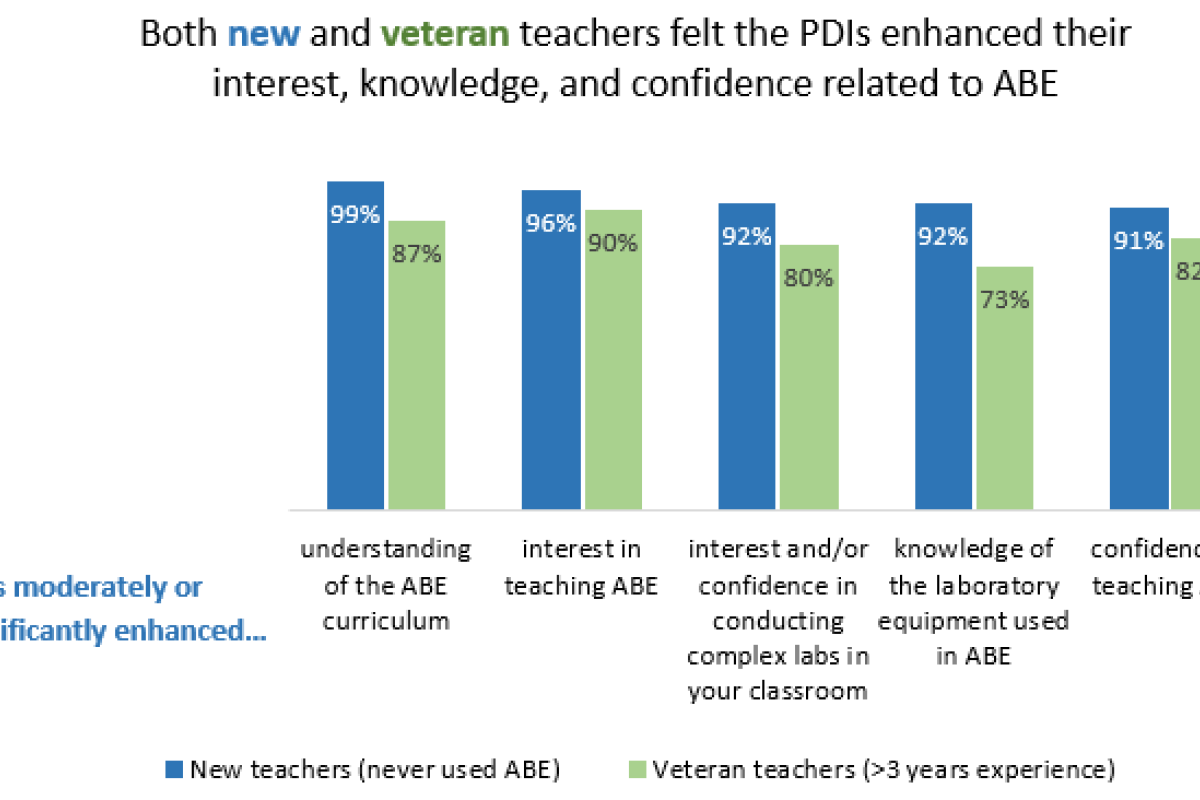

In 2017, the Program Office developed a PDI survey for participants to complete after their PDI experiences. The survey had two purposes: to help individual sites understand what was working well and what could be improved in their PDIs, and to inform ABE’s overall approach to professional learning. After each PDI, Program Office evaluators provided a summary report to the respective ABE site, which the site used to inform changes to future PDIs. The survey collected information about the teachers attending PDIs (e.g., grades and courses taught); feedback on specific elements of the PDIs (e.g., whether the length of the PDI was appropriate); participants’ views on the quality of their PDI experiences, how they benefited, and how the PDI could be improved; and teachers’ initial thoughts about implementing ABE with their students.

These survey results show that teachers were generally enthusiastic about their PDI experiences and the program as a whole. Nearly all teachers felt the PDIs were high quality and enhanced their knowledge. However, any survey captures only a snapshot of how someone feels at a specific time. In the case of ABE, there is often a lag between when teachers are trained and when they implement ABE in their classrooms. And until they have done the labs with their students, teachers cannot fully anticipate what their needs and challenges will be. Although the survey is valuable for understanding participants’ experiences at the PDI, more information is needed to understand teachers’ experiences implementing ABE in their classrooms and how best to prepare and support them.

To this end, as ABE continues to grow, the Program Office is shifting its approach to evaluating professional learning. A one-size-fits-all approach to evaluation is no longer useful. Program sites design professional learning based on their own program contexts and teacher needs, and they are likely to have different questions they want to answer when evaluating their programs. In addition, after considering the PDI goals listed earlier, the Professional Learning working group confirmed that a more individualized, site-led evaluation would provide more useful and actionable information. For these reasons, evaluation of professional learning has been decentralized, giving ABE sites the freedom to explore the questions that are most useful to them. For example, sites can explore:

- Teacher feedback on their PDI experiences

- Changes in teacher content knowledge and lab skills

- Teacher confidence implementing ABE

- Teacher interest in conducting ABE again and/or implementing additional labs

- The extent to which teachers felt prepared to implement ABE, including teachers’ feedback on their successes and challenges

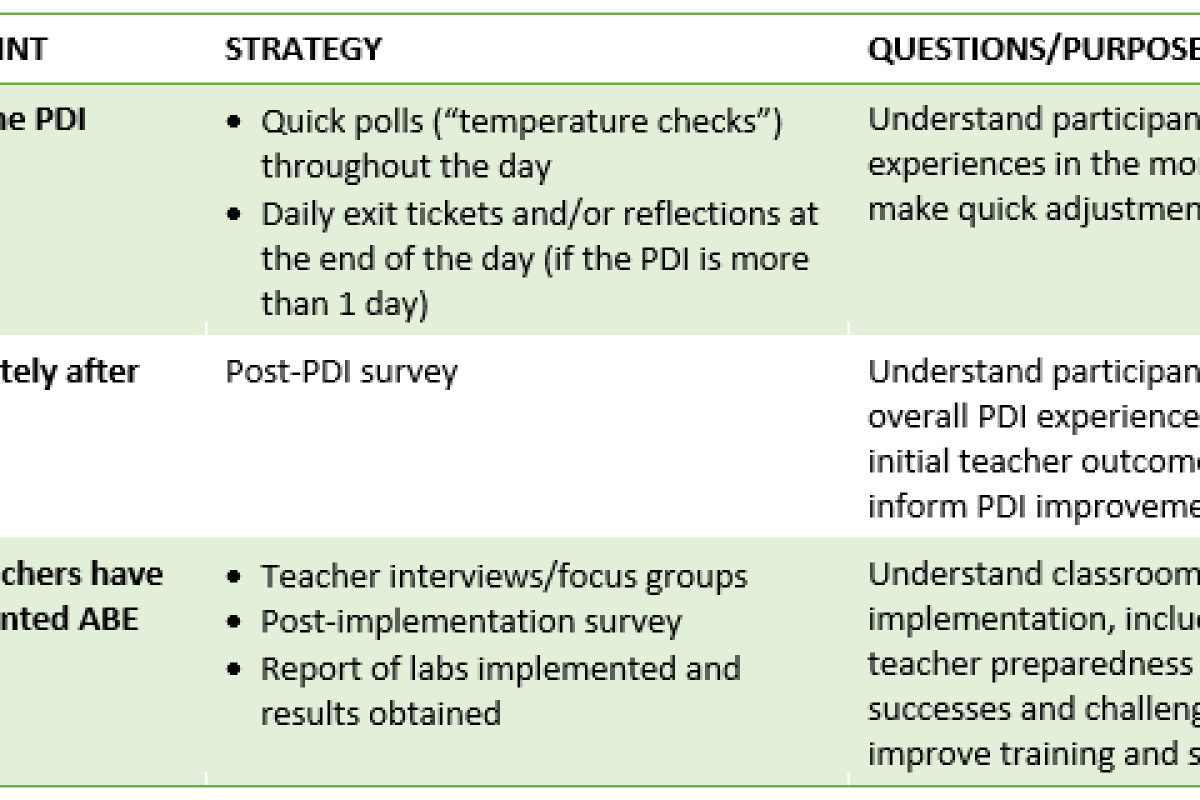

So, what are some approaches sites can take when evaluating their own professional learning? The table below shows some possible strategies, many of which are already in use by some ABE sites.

As ABE grows, we look forward to learning together how to implement effective teacher professional learning and to continuously improve as a global ABE community.